Times are changing. Stuff is… harder. More complex. That’s especially true with the advent of generative AI, LLMs, and all those other words/abbreviations that basically mean “robots that know more than you, think faster than you, and can do stuff for you.” As we approach this new era of technology, I want to get practical. Most of us are kinda just waiting to see how things shake out, and there doesn’t seem to be a consensus as to how it will impact the global economy. But I want to focus on one singular part of this for a second: robots are getting harder to distinguish from humans. They really are.

Is our world going to be Blade Runner or the Cylons from Battlestar Galactica? Not anytime soon. However, it’s getting much easier to impersonate a human online. In the near future, the difference will likely be imperceptible. This isn’t a hot take. This isn’t controversial. Why does it matter, though? As an analyst, why should I care?

Why it matters

While I mostly work in technical implementation, I have my hands in a little bit of everything – digital media, analysis, A/B testing, CDPs, etc. In every implementation – every. single. one. – I’ve seen a significant amount of easily-identifiable bot traffic adding noise to data. Now I’ve partnered with Dr. Fou and his amazing algorithm at FouAnalytics to scale the impact of these bots – but the question still lingers: what about bots that check all of the right boxes in any bot detection tool?

I haven’t come across a better algorithm – and there are some FANTASTIC cheat sheets out there that help you build segments to mitigate bot traffic; but you KNOW some squeak by. The question is this – do you think that number will increase or decrease? Do you think that in 5 years we’ll see fewer bots? No, I don’t think so – and I would LOVE to talk to folks who think that bots will become less of a problem.

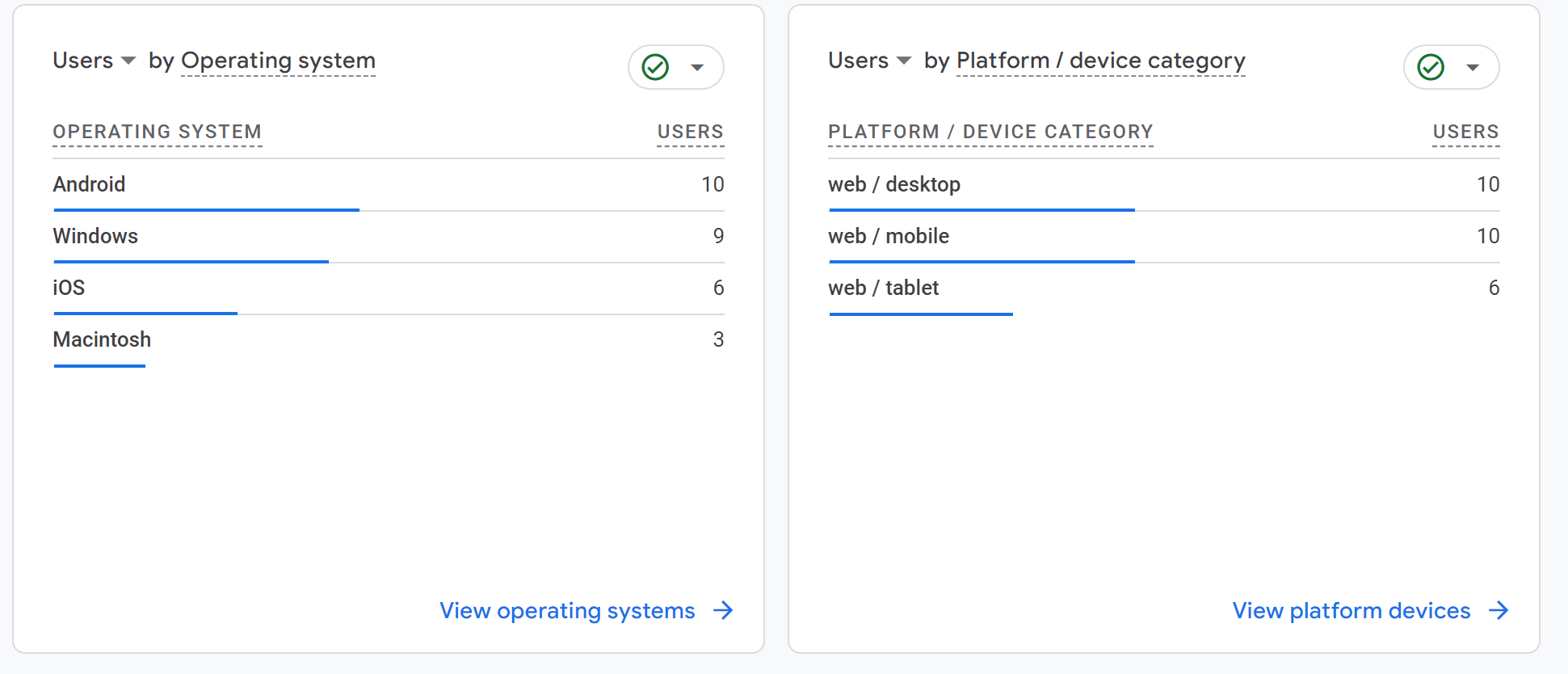

In fact, I went to ChatGPT to see if I could make one of my own. I don’t code in Python. I know how to run basic commands, but I am not an expert. In 5 minutes I had a bot up and running, sending traffic to Google Analytics using free proxies it scraped from the internet. I cleared my cookies, left it running for a few minutes, and lo and behold! Traffic! Imperceptible traffic that evenly distributes its user agents between desktop, mobile, and tablet:

I didn’t send much traffic because I was just seeing if I could do it… from my crappy tablet (no, I didn’t even have a mouse or keyboard). This traffic LOOKED real – and there was no way to reliably block it. None. Now there was one consistent signal I could pick up, which was the 800×600 resolution, but I could have faked that, too… and, honestly, are you going to filter that traffic out?
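To make that trade-off concrete, here is a minimal sketch of what filtering on that single signal would look like. The session rows and field names are made up for illustration (they are not a Google Analytics export schema), and the only rule is the 800×600 resolution mentioned above.

```python
from collections import Counter

# Hypothetical session rows; the field names are assumptions for illustration,
# not a real Google Analytics export schema.
sessions = [
    {"session_id": "a1", "device": "desktop", "resolution": "800x600"},
    {"session_id": "b2", "device": "mobile", "resolution": "390x844"},
    {"session_id": "c3", "device": "tablet", "resolution": "800x600"},
    {"session_id": "d4", "device": "desktop", "resolution": "1920x1080"},
]

# The one consistent signal from the experiment described above.
SUSPECT_RESOLUTIONS = {"800x600"}


def split_sessions(rows, suspect=SUSPECT_RESOLUTIONS):
    """Split rows into (kept, flagged) using screen resolution alone."""
    kept, flagged = [], []
    for row in rows:
        if row["resolution"] in suspect:
            flagged.append(row)
        else:
            kept.append(row)
    return kept, flagged


kept, flagged = split_sessions(sessions)
# Count flagged sessions per device type to see what the rule would remove.
flagged_by_device = Counter(row["device"] for row in flagged)
print(len(kept), len(flagged), dict(flagged_by_device))
```

A rule like this is blunt: it throws out any real person who happens to browse at that resolution, and it stops working the moment the bot randomizes the value.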

This took 5 minutes.

Let me say that again. This took 5 minutes.

I won’t be posting the code for ethical reasons, but let me just say it was no more than 75 lines of code. So… great. This is an emerging problem. What the hell do you DO about it? Enter: the authenticated internet.

The authenticated internet

I’ve been latched onto this idea of the authenticated internet. I’m not talking about creating a login. Nope. That’s too easy. Logins were actually the big concept back in 2012 or so – we wanted to collect user data at our ad agency. The hot topic at the time was collecting user accounts because user accounts = data… and data = money, right? Obviously this isn’t super sustainable. In fact, one of the top pages that gets hit on websites by bots is the login page (for obvious reasons).

So NOW what? We can’t stop bots from proliferating. We can’t stop them from creating fake accounts. There’s no accountability. The future of the internet is authentication. I don’t mean you hand over all of your personal information to a website. Nope. No one would agree to that. Instead, I’m suggesting there will be 2 internets – one for agents who can validate they are human and one for everyone else.

Boy, that sounds like a lot of work. Why would I be incentivized to validate that I am a human? Why would ANYONE buy into this thing? WELL I’M GLAD I ASKED! The answer is simple: money. (Isn’t that always the answer?)

Why anyone would do this

“So you’re telling me that I have to validate my actual identity to access the stuff I want to access on the website?”

YES! It will mean websites (read: publishers) will make much more money. This is actually happening right now in a relatively prohibitive way with news subscriptions. The problem is this:

Let’s say I have 2 websites that publish the same type of stuff. Let’s say they publish news. 2 major news sites. One has phenomenal data hygiene and the other does not. Both sell ads as a major source of income for their website. Since they both have similar BRAND equity, they both can sell ads for a premium. However, which one is probably making more money? If you guessed the one with bad hygiene, you’re right! It has more impressions to sell, because every bot pageview it doesn’t filter out still counts as an ad impression.

That’s not to say they don’t stop fraudulent traffic. They do! They stop what they can get away with and don’t worry about the rest. Where I’m going with this is that it’s hard – and it’s getting MUCH harder – to EMPIRICALLY prove that the traffic viewing the ads on your site is human.

If I’m an advertiser, I want to have assurance that the ads I am displaying are actually being viewed by people… not the bot Jim made in 5 minutes on ChatGPT cycling through proxies. Websites should earn money based on impressions of human traffic… and every day it becomes harder to identify a human. When do we hit critical mass?

Final Thoughts

To suggest people will willingly surrender their personal information to every website that wants authentication is naive. It won’t happen. Some database owned by the government or some government-regulated body will own that data and send some type of key that validates you’re a human. So yeah, you’ll have to tell someone; but that’s NOT unprecedented. That’s happening right now in states like Louisiana for people who want to view adult content. I briefly allude to this in a previous post.
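Here is a rough sketch of what “some type of key” could look like, just to show the shape of the idea. Everything in it is an assumption on my part: the function names, the token format, and the shared secret are hypothetical and not based on any existing system. The issuer signs a random value plus an expiry time, and the website checks the signature without ever seeing personal information.

```python
import hashlib
import hmac
import secrets
import time

# Hypothetical secret shared by the issuing body and a publisher (an assumption
# made to keep the sketch short).
ISSUER_SECRET = secrets.token_bytes(32)


def issue_token(ttl_seconds=300, now=None, secret=ISSUER_SECRET):
    """Issuer side: sign a random nonce plus an expiry. No personal data inside."""
    now = time.time() if now is None else now
    payload = f"{secrets.token_hex(8)}.{int(now) + ttl_seconds}"
    signature = hmac.new(secret, payload.encode(), hashlib.sha256).hexdigest()
    return f"{payload}.{signature}"


def verify_token(token, now=None, secret=ISSUER_SECRET):
    """Publisher side: accept only an unexpired token with a valid signature."""
    now = time.time() if now is None else now
    try:
        nonce, expires, signature = token.split(".")
        expected = hmac.new(
            secret, f"{nonce}.{expires}".encode(), hashlib.sha256
        ).hexdigest()
        return hmac.compare_digest(signature, expected) and int(expires) > now
    except (ValueError, TypeError):
        # Malformed token: wrong number of parts, bad expiry, or odd characters.
        return False


token = issue_token()
print(verify_token(token))
```

With a shared secret like this, any publisher holding it could also mint tokens, so a real version would more plausibly use public-key signatures. And nothing here stops a validated human from handing a token to a bot, which is part of why this won’t completely stop fraud.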

This, in my opinion, is an inevitability. People are quickly realizing their ad dollars are being wasted on bots and fraud. There are more examples than I can count. Keep in mind, this method won’t completely stop fraud. Even if I know you’re a human, I can still commit fraud in an app or on a website via stuff like stacked ads, pixel stuffing, and loading webpages in the background of apps.

All this means is we need to change the way we think. This may not happen in the next 5 or 6 years. Maybe it’ll be 10 years. Eventually, major bot detection companies will get called out for being nothing but a security blanket. When investors pull back the sheets, they’ll see that we need a scalable solution. That solution will be an authenticated internet.