There could be a major problem that very few people even know about: companies are likely paying for 90%+ non-human traffic. I will not name any specific advertising platform, but if you have any kind of media budget, you’re using them (mostly display and social). We’re not talking about one platform – we’re talking about MOST major advertising platforms. Let’s address a few qualifying phrases I used: “could be a major problem” and “likely paying for non-human traffic”. I am using these because advertising platforms do not provide enough data to directly trace the traffic back to the click. It’s effectively impossible to get 100% conclusive proof (by design). However, disproving this would require insane assumptions across multiple major organizations.

Background

We (Charlie Tysse, Sam Burge, and I at Search Discovery) were approached by a client to investigate major discrepancies between paid clicks and site traffic. To debug something like this, you have to break the process into small pieces because a lot can happen between the click and the page load. Here’s a rough flow:

There’s a lot that happens between each of these steps – and not every organization has each of them. These steps were broken down into a more granular checklist of things that needed to happen in order to be considered a valid session in the reporting tool. We tested multiple browsers, devices, proxies, and live ads to ensure data could reach the reporting tool. It all ran exactly as intended.

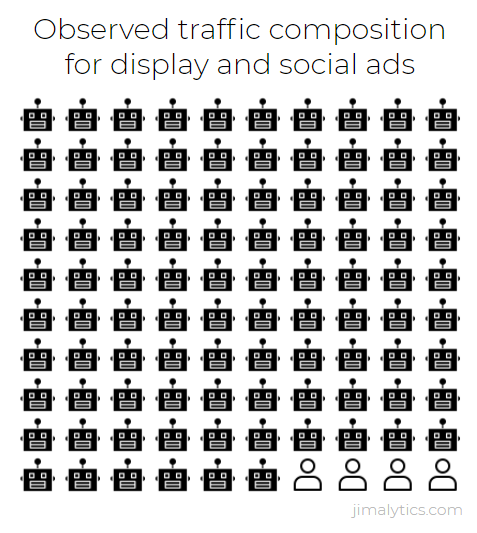

Then we checked the CDN logs and found bots… everywhere. If an ad saw 100 clicks, we might have seen 1,000 requests for the campaign URL. Bots are storing the target URL and hitting the destination page repeatedly, which is why you’ll see more requests than clicks. We even saw a 1:1 ratio between clicks and what’s considered “monitored” traffic for one campaign. Monitored traffic is suspected bot traffic. That monitored traffic would get to the consent manager, and that’s as far as it could be measured. We saw this result with nearly all paid campaigns: close to 99% non-human traffic. This is the outcome of millions of advertising dollars spent over the past year. This is a huge problem for the company. Then we found another affected company. And another. Something’s wrong here.
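
To make the CDN check concrete, here is a minimal sketch of the comparison, not the exact analysis we ran. It assumes you can export CDN log records with a campaign identifier and a flag for "monitored" (suspected bot) requests. The field names `campaign` and `monitored`, the function name, and the sample numbers are all hypothetical; your CDN will label these differently.

```python
from collections import Counter


def summarize_campaign_requests(log_records, reported_clicks):
    """Compare CDN requests per campaign with clicks reported by the ad platform.

    log_records: iterable of dicts with hypothetical keys
        "campaign" (str) and "monitored" (bool, suspected bot traffic).
    reported_clicks: dict mapping campaign -> clicks billed by the platform.
    """
    requests = Counter()
    monitored = Counter()
    for record in log_records:
        campaign = record["campaign"]
        requests[campaign] += 1
        if record["monitored"]:
            monitored[campaign] += 1

    summary = {}
    for campaign, clicks in reported_clicks.items():
        total = requests[campaign]
        summary[campaign] = {
            "clicks": clicks,
            "requests": total,
            "monitored": monitored[campaign],
            # More than 1.0 means the campaign URL was requested more often than it was clicked.
            "requests_per_click": total / clicks if clicks else None,
            # Share of requests the CDN flagged as suspected bots.
            "monitored_share": monitored[campaign] / total if total else None,
        }
    return summary


if __name__ == "__main__":
    # Made-up sample data, for illustration only.
    sample_logs = [
        {"campaign": "spring_sale", "monitored": True},
        {"campaign": "spring_sale", "monitored": True},
        {"campaign": "spring_sale", "monitored": True},
        {"campaign": "spring_sale", "monitored": False},
    ]
    print(summarize_campaign_requests(sample_logs, {"spring_sale": 2}))
```

A `requests_per_click` well above 1 combined with a high `monitored_share` is the pattern described above.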

If this is true, incalculable digital ad dollars are being spent on non-human traffic. Sites serving the EU have consent managers that seem to be a tracking dead-end for these bots, which makes the gap visible there. It’s likely flying under the radar in the US.

Assumptions

Outlining assumptions is a very important step so we understand where we see (or don’t see) potential gaps. We’re assuming the following:

- More than 5% of users consent to tracking (average is well over 50%).

- CDNs are not accidentally blocking humans (their algorithms are more conservative to prevent this).

- Most users wait for the destination page to start loading (almost everyone would have to close the page before it starts to load).

- There is not some very obscure nuance to tracking that prevents data from going to the reporting tool (we tested/validated with multiple campaigns, browsers, devices, locations).

- Tools and platforms aren’t 100% effective at filtering bots (there’s only so much humans and algorithms can do).

- The same assumption does not fail at all 3 very large organizations (that would be unlikely).

I would be floored if any of these assumptions are false. The only way to validate these assumptions would be to get granular user agent and PII data from the advertising platform, which is not possible.

Why this matters

Maybe you skimmed the Background section where I said if this is true, “incalculable digital ad dollars are being spent on non-human traffic”. Let’s say you’re spending $100 on ads with the assumption that you’re paying $1 per click. That means you are spending $100 for 100 opportunities to capture a potential customer. Now if I told you that over 90% of the traffic is non-human, that means you’re spending $10 per human click. Better yet, if 99% of the traffic is non-human, that nets out to $100 per human click. Now imagine I’ve spent $10,000,000 on these ads and I’ve now found out I’m getting a small fraction of value out of that. This is a huge deal.
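
The arithmetic above can be written out as a short sketch. The function name and parameters are made up for illustration, and the inputs are the example figures from this section, not client data.

```python
def cost_per_human_click(spend, clicks, non_human_share):
    """Effective cost of each human click when a share of paid clicks is non-human.

    spend: total ad spend in dollars.
    clicks: clicks billed by the platform.
    non_human_share: fraction of clicks that are non-human (0 <= share < 1).
    """
    if not 0 <= non_human_share < 1:
        raise ValueError("non_human_share must be in [0, 1)")
    human_clicks = clicks * (1 - non_human_share)
    return spend / human_clicks


if __name__ == "__main__":
    # $100 spent on 100 clicks, at increasing shares of non-human traffic.
    for share in (0.0, 0.90, 0.99):
        cost = cost_per_human_click(100, 100, share)
        print(f"{share:.0%} non-human: about ${cost:.2f} per human click")
```

The cost grows as 1 / (1 - share), so each step closer to 100% non-human traffic is far more expensive than the one before it.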

To be clear, I am not accusing anyone of ad fraud. I don’t have proof of ad fraud because of the assumptions above. However, we’re losing all of the value in the ad spend because “real” traffic is not making it to the website. This is much easier to identify in the EU. If you’re in the US, you may see an incredibly high bounce rate from some paid traffic sources.

Motive

Why is this happening? Are competitors sabotaging campaigns? Don’t be ridiculous. The truth is: I don’t know, but I do have some ideas. Don your tinfoil hat. All of this is speculative.

I believe it’s in the best interest of anyone who wants to undermine trust in large, publicly traded tech companies. Quite a few stocks on the NASDAQ get the majority of their revenue from advertising. If advertisers think they’re paying for bot traffic, they won’t buy ads. If people don’t buy ads, the tech firms don’t hit revenue targets. Less reliable revenue from ads? Bubble pops and stocks go down… way down. Why would this matter to anyone? I can think of a reason. This is extreme, but something like this could destabilize the entire economy.

Other than that, it’s hard to imagine benign crawlers that click on paid ads for fun. Maybe someone somewhere is testing for vulnerabilities. I’m not sure why it would be at this scale, though.

So what now?

First of all, how the hell are we seemingly the first ones to identify this? Interestingly, we discovered this right around the time Lukas Oldenburg published a very timely article about Bot Analysis and Filtering. What we’re learning now is the scale of the problem. Advertising platforms do not want bots. They want you to have the highest quality traffic. It’s in their best interest. The higher the quality of traffic, the more money you’ll spend with them. Maybe we’ve reached an inflection point with bots. Maybe they’re now sophisticated enough to get through fancy algorithms. There are humans behind both the aggressors and the defenders. Anything is possible.

The intent of this article is to encourage you to take the steps we took to validate traffic from your advertising platforms. In the US, that might be difficult. In the EU, it seems the bots aren’t motivated to get past the consent manager (for now). Collect data from your CDN to see how much traffic is being monitored. If possible, get granular data from your consent manager as well. I’d like to take a page from Lukas and suggest that we may reach a point where our job as analysts will be focused just on authenticated traffic – people – instead of anonymous traffic.
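
If you can collect counts at each step, one way to see where paid traffic disappears is to compute how much survives from one stage to the next. This is a rough sketch under the assumption that you have a count for every stage. The stage names and numbers below are hypothetical placeholders, not our client’s data.

```python
def funnel_retention(stages):
    """Return the share of traffic retained between consecutive funnel stages.

    stages: list of (stage_name, count) tuples ordered from ad click to
        recorded session.
    """
    rows = []
    for (prev_name, prev_count), (name, count) in zip(stages, stages[1:]):
        # None marks a step that cannot be evaluated because nothing reached it.
        retained = count / prev_count if prev_count else None
        rows.append((f"{prev_name} -> {name}", retained))
    return rows


if __name__ == "__main__":
    # Hypothetical counts for one campaign, for illustration only.
    example_stages = [
        ("paid clicks", 1000),
        ("unmonitored CDN requests", 20),
        ("consent granted", 10),
        ("sessions in reporting tool", 10),
    ]
    for step, retained in funnel_retention(example_stages):
        label = "n/a" if retained is None else f"{retained:.1%}"
        print(f"{step}: {label} retained")
```

A steep drop at the first step with healthy retention afterwards would point at the traffic itself rather than at your tagging.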

In an effort to show how bots can be created and deployed to deliver artificial traffic, I’m teaming up with a colleague, Blake Fraley, to compete in an “arms race” where he’s building a bot and I’m trying to prevent it from registering as legitimate traffic. The plan is to eventually attempt to mimic a click on an ad platform in a controlled environment. Bot traffic has evolved immensely over the past decade and can be deployed at scale with mini-computers like the Raspberry Pi. With only modest funding, I think building an army of sophisticated bots is absolutely possible.

What a good read! I think an underrated point you made is about looking at authenticated traffic. This is huge for companies who want to know their digital spend matters. The goal should be to drive account creation or email sign-ups rather than clicks. After reading this, the best recommendation I can think of is around authenticated users. What a great idea!